We hear anecdotally that figuring out which AI contract review tool to buy is a very difficult process. The category is crowded and noisy, and the vendors all say the same things. “We spent upwards of two years evaluating these tools,” one customer told us. “It was a grueling process.”

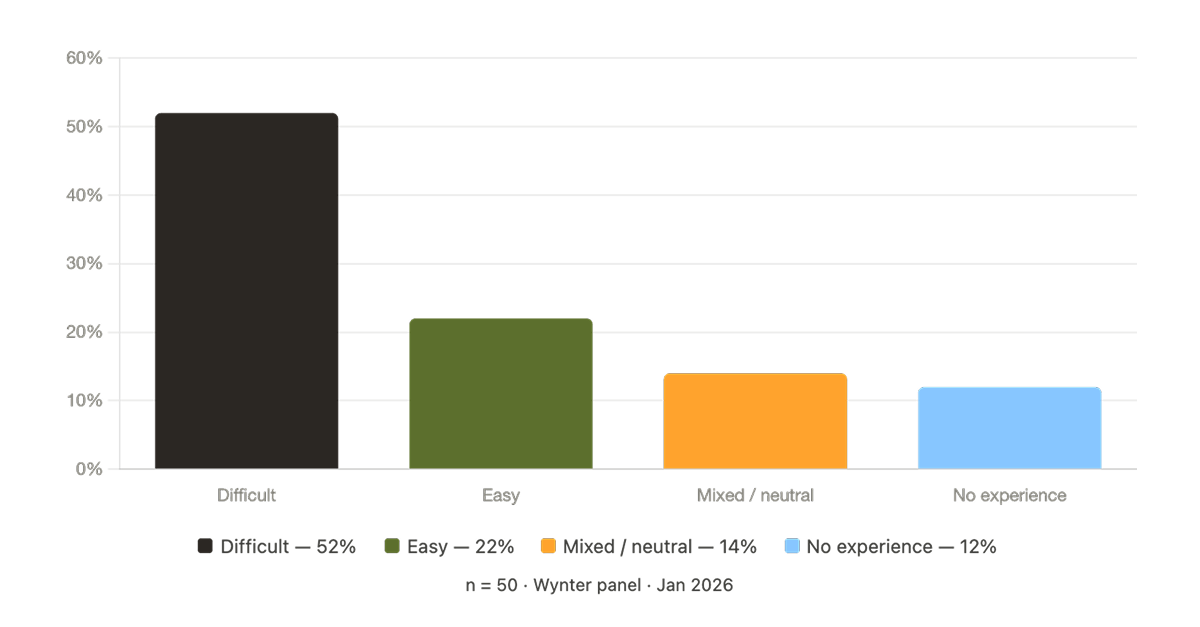

We wanted to know if the data supported what we’ve been hearing, so we surveyed 50 legal leaders across enterprise and mid-market companies to find out what it’s like to evaluate AI legal tools.

More than half said it’s difficult to determine which tool is the right one. Only one in five said it was easy.

Lawyers are certainly using AI; 87% of general counsel say that their teams use generative AI for all sorts of legal tasks, like research, document drafting, and contract analysis. Over the last several years, AI’s increasing capabilities have led the category of AI-powered legal tools to grow exponentially; in fact, faster than buyers’ ability to assess it.

In-house counsel, legal ops leaders, and procurement teams told us they are being asked to make significant purchasing decisions in a category that's crowded, noisy, and in some cases, hard to trust. And the industry isn't making it any easier.

The responses from the 52% of lawyers who found evaluating tools difficult clustered around five distinct themes.

The frustrations they’re feeling are clear; these are all signs of a noisy market making claims that are hard to verify.

The most common complaint, cited by nearly a quarter of respondents, was that it’s difficult to differentiate one legal tool from another.

"There are lots of overlapping claims but vendors are at different stages of maturity, accuracy and security," said one general counsel at a mid-market pharma company. "So you have to pilot so you can compare tools."

Another respondent, a senior counsel, put it more bluntly: "After a while, I get AI vendor merge where they all seem to offer the same software functions."

When every vendor claims to do contract review, redlining, playbook management, and AI-powered analysis, buyers lose the ability to distinguish between them, and that’s not a way to make great business decisions.

Another common concern was the difficulty of assessing these tools’ accuracy.

For legal professionals, this isn’t an academic concern. The stakes are high. Nobody wants to get sued because an AI hallucinated or came up with the wrong answer. So when legal teams evaluate a tool, they want proof it actually works. But proof is hard to get.

"The top two things I'm worried about — hallucination and accurate representation of the law — seem nearly impossible to evaluate without redoing all of the work manually," said one senior counsel. "I'm not sure how to better investigate the accuracy."

Another respondent described numerous variables that make accuracy almost impossible to pin down: "The accuracy of AI is hard to define. Results vary dramatically based on prompt quality, document structure, data cleanliness, user expertise."

Sixteen percent of respondents identified the gap between a vendor demo and real-world performance as a primary frustration. The pattern was consistent: tools look like they work well in controlled conditions but disappoint in actual use.

"When you see a demo, it is showing the product in its best light, but when you use it, it may not be as effective," said one corporate counsel responsible for AI technology procurement at a large manufacturer.

A privacy and cyber liability attorney framed it as a structural problem: "Most companies you really need a proof of concept to actually evaluate, because their usefulness in a demo or on a website just doesn't show how they would work for your use case."

This gap isn't unique to legal AI, but it is especially hard to accept in a category where accuracy and workflow fit are non-negotiable, and where the cost of a bad decision is measured not just in time or money but in serious negative consequences for the company.

Fourteen percent of respondents called out vendor overpromising as an obstacle to good evaluation. Examples cited included products pitched as production-ready that are still in development, RFP responses full of buzzwords that don't map to actual features, and products designed by people who don't understand legal workflows.

"Many overused buzzwords in the RFP responses and some proposed products/solutions were still in the ideation phase," said one senior legal counsel currently running a formal RFP process for their organization.

A lead counsel at an enterprise SaaS company noted: "Many of the features they show are either basic and should be in any type of tracking tool, or are not something attorneys need. You can tell which were designed by attorneys."

Legal professionals’ work is specific and well-defined. That’s why specialized tools that reflect their workflow are so important. AI tools have to suit how attorneys already work, not force lawyers to reshape their work around the tool.

Several key themes arose among the 22% of legal professionals who found assessing AI tools easy. During the evaluation process, they had clear decision-making criteria, direct access to test the tool on their actual work, and enough familiarity with AI to know what questions to ask.

"I find it pretty easy to evaluate AI vendors because it comes down to whether their product works well or not," said one patent attorney. "Many tools don't seem to understand the actual needs of patent attorneys or understand what our workflow is really like."

The vendors that make evaluation easier are the ones that provide real evidence — in benchmarking data, real-world trials, and customer references — that their products work in conditions that resemble the buyers’ own.

Legal professionals have an aversion to puffery. They’re not fooled by slick demos and marketing fluff. They want to know whether AI tools will do the specific work they’re tasked to do.

The vendors who earn their trust will be the ones who make evaluation easy by providing transparent, evidence-based information that legal professionals can use to make good decisions.

__________________

This post draws on a survey of 50 in-house legal professionals conducted via the Wynter panel in January 2026. Respondents ranged from associate attorneys to General Counsels across enterprise and mid-market companies.

Schedule a demo today.